This is the first release of silviculture! Note that this is alpha-quality software, and might do anything, including releasing hordes of angry beavers that eat all of your nice trees.

Silviculture uses Y.js along with codemirror to support realtime collaborative editing of all of the trees in a forest.

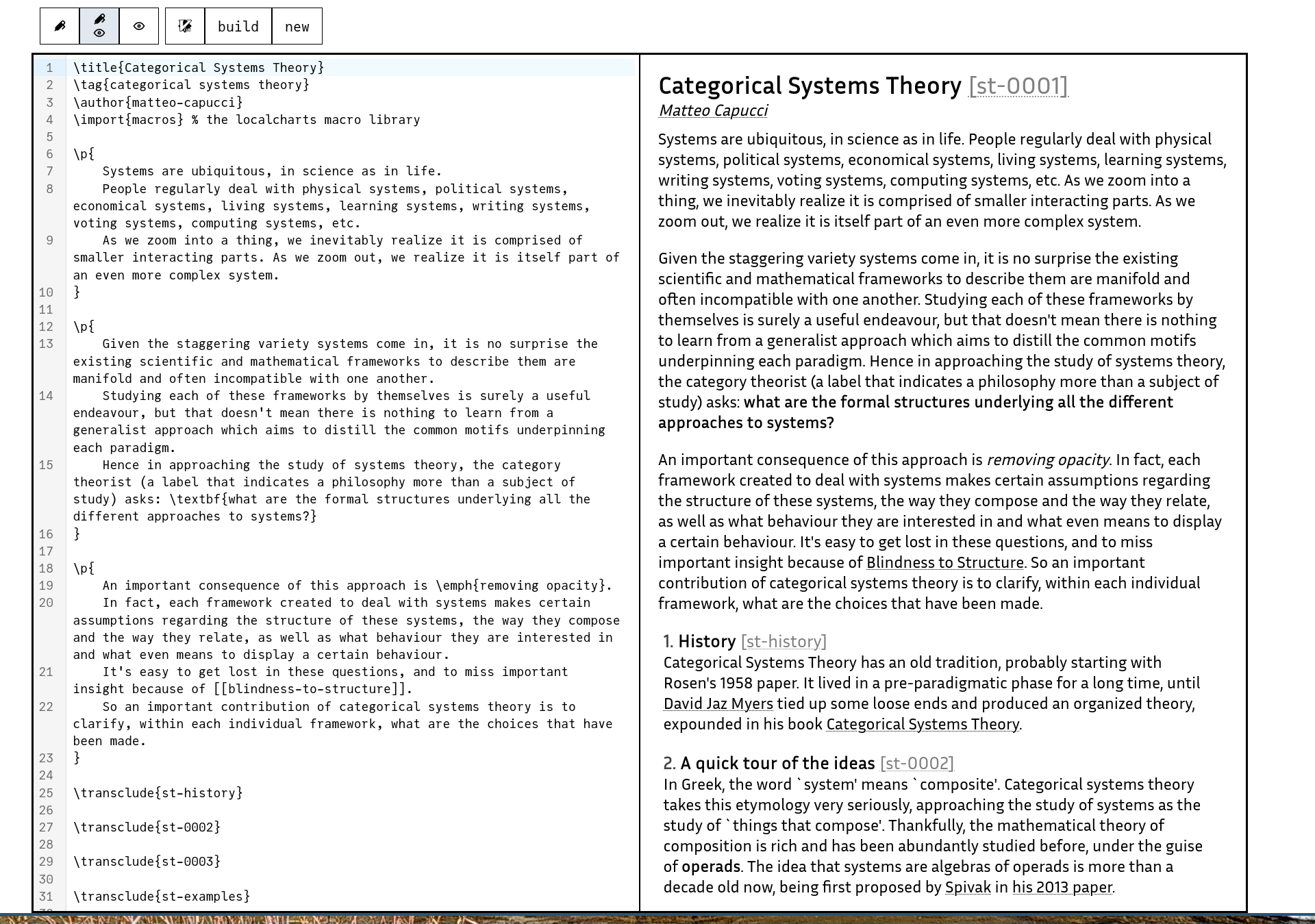

Silviculture works with just an editor, just a viewer, or a vertical split-screen editor and viewer combo, similar to overleaf or hedgedoc.

Instead of the preview pane, one can instead pull up a quick reference card for forester syntax and silviculture shortcuts.

By pressing Ctrl-s, Ctrl-enter, hitting the build button, or using :w in vim mode, you can ask the server to rebuild the forest, wrapping forester build. Thankfully because forester is so fast this normally only takes a second or two! This also refreshes the preview pane of any other connected clients to show the latest build.

Errors from forester or from LaTeX are propagated into the web app and replace the preview pane.

If you click on a link to another tree in the preview pane, it opens up an edit view on that tree. Additionally, Ctrk-k works as it does in a normal forest, opening up a menu to rapidly jump between trees by search.

Hitting Ctrl-i opens up a menu to quickly create a new tree and transclude it in the current tree, wrapping forester new.

A killer feature for some, irrelevant and optional for others.

I recently had the precise distinction between graphical and structural causal models explained to me by Jeremy Zucker and I figured out how to express the differences and commonalities in a neat categorical way.

First of all, graphical and structural causal models share the same syntax, which I will call causal diagrams.

Typically, causal models are expressed as directed acyclic graphs (DAGs). When you draw a DAG, it might look something like a commutative diagram or a string diagram, however neither of these interpretations actually makes much sense for the purposes that causal models are used for.

Instead, one should think of the DAG for a causal model as a certain type of presentation for a free Markov category.

A directed acyclic graph consists of a set of vertices

v \notin \operatorname { \mathrm {pa}} (v) for allv \in V - Let

\leq be the preorder reflexively and transitively generated byv' \leq v ifv' \in \operatorname { \mathrm {pa}} (v) . Then\leq is in fact a partial order (i.e. ifv' \leq v andv \leq v' thenv = v' ).

Given a DAG

- For each

v \in V , a speciesv - For each

v \in V , a transition\mathbf {sample} _v with multisource\operatorname { \mathrm {pa}} (v) and multitarget\{ v \}

Given a DAG

If

Now, the natural thing to do with a free Markov category is to consider Markov functors into specific Markov categories. The simplest case of this gives us graphical causal models.

A graphical causal model consists of a DAG

In order to define structural causal models, we have to go slightly beyond Markov categories, however.

Let

Let

There is a functor

A structural causal model is a Markov bifunctor from

Let

Let

In other words, the affine geometric realization of

Given simplicial complexes

Suppose that

The statement of this theorem is then simply

Before we go onto prove this, note that it is fairly easy to come up with a counterexample if we did not have the condition in . Specifically, if

A simplicial complex consists of a set

A prolynomial is a category

This is a stub article, but I'd like to record some thoughts from a discussion I've been having with David Spivak.

A prolynomial is a category

For this current article, we will recall that lax double functors to

Thus, equivalently, a prolynomial is a category

Given a prolynomial

This has to be worked out in more detail; again this is a stub!

Intuitively, an SDE given by the following equation

In synthetic differential geometry, tangent vectors are maps

One model theory for synthetic differential geometry is in (sheaves on)

What if we could do a similar thing in stochastic land? There's an analogous "algebraic dual" to measurable spaces and probability distributions on them; von Neumann algebras and positive linear maps.

I propose that if we allow nilpotents in some suitably generalized version of von Neumman algebras, then a "stochastic tangent vector to a point" should be a positive linear map

Why should this make sense? Well, the dual condition to

Now, write

Well, the positive elements of

And so in particular, the above SDE fits into this, if we define

I am certainly not experienced enough at functional analysis that I can say with confidence that this will all work out and give the beautiful theory of SDEs of my dreams. But the fact that positivity for a map

James Fairbanks wants multi-domain multi-scale multi-physics. Well, in fact many people want this, but they don't explicitly know it. Kevin Carlson has been working on some of the categorical story for the "multi-domain" part in Multi-domain multi-physics?! and Solutions of multi-domain multi-physics problems.

In order to get the "multi-scale" part, however, we need to be able to "pull and push"

that "sends the middle vertex on the left to the middle of the triangle on the right".

There are several ways of solving this problem. One way is with left Kan extensions. Another is with the codensity monad. As fun as these are, we are going to take a practical approach.

First of all, let's assume that we are working with simplicial complexes instead of simplicial sets.

A simplicial complex consists of a set

We then define a geometric map in the following way.

Given simplicial complexes

To understand this condition, let us do some examples. Let

But this is not the only place we could send the vertices in

The relevant proposition to prove is the following. First we must give the following definition.

Let

In other words, the affine geometric realization of

Suppose that

The statement of this theorem is then simply

Before we go onto prove this, note that it is fairly easy to come up with a counterexample if we did not have the condition in . Specifically, if

This is brief note to record a neat observation I just had.

Let

Then if we construct the category of charts for

On the other hand, if we construct the category of lenses for

Thus, we get a double category where the objects are diagrams, the vertical arrows are lax diagram morphisms, the horizontal arrows are colax diagram morphism, and the squares are compatibility squares. I'm not sure if anyone's thought about this double category before; it seems kind of neat?

One question I have is that if we consider

The classical story for morphisms between systems as given in myers_Categorical_2023 requires a certain square to commute strictly. However, in the case of non-deterministic systems, which use morphims in the Kleisli category for the powerset monad, it makes sense to ask this square to be filled by a 2-cell.

Specifically, a closed nondeterministic discrete system is a set

However, the Kleisli category of the powerset monad is in fact a 2-category (or a poset-enriched category), because we can relate

How can we allow this in categorical systems theory?

Essentially, the idea is to generalize Definition 4.1.0.1 from myers_Categorical_2023 (see also Matteo's Categorical Systems Theory for more general context) in the following way. Instead of starting with an indexed category

Then, we build the double category of lenses and charts in the following way. The horizontal and vertical morphisms (i.e. the lenses and the charts) are precisely the horizontal and vertical morphisms that we would get if we postcomposed

For example, consider the following indexed 2-category.

Let

Then

We could also do the same thing for the non-empty powerset monad

Now, build a double category category of lenses and charts for the indexed 2-category for the non-empty powerset monad as described above. There is then a corresponding systems theory on this double category, built via the section

This systems theory allows us to have more morphisms between systems than we previously could. For instance, suppose that we have a closed system

On the other hand, a lax morphism (i.e. morphism in the systems theory for the indexed 2-category)

Let

Then

We could also do the same thing for the non-empty powerset monad

The purpose of this tree is to lay out a complete account of the 2-category of strict double operads. The intended definition for double operad can be succinctly termed as

- Show that

\mathsf { Cat } as a 1-category has pullbacks. - Define a monad

\mathsf { Fam } \colon \mathsf { Cat } \to \mathsf { Cat } . - Show that

\mathsf { Fam } \colon \mathsf { Cat } \to \mathsf { Cat } is a cartesian monad on\mathsf { Cat } . - Apply Higher operads, higher categories to define a double operad to be a

\mathsf { Fam } -multicategory. - Show that symmetric monoidal strict double categories correspond to representable double operads.

The 1-category

This is well known, but we will reprove it here for the sake of explicitness.

It suffices to show that

Product categories are well-known, and also it is clear that

So we must show the following:

- If we let

E \colon \mathscr {E} _0 \hookrightarrow U( \mathscr {C} ) be the equalizer ofU(f),U(g) in\mathsf { Graph } , then\mathscr {E} _0 picks out a subcategory of\mathscr {C} , which we will call\mathscr {E} . \mathscr {E} satisfies the universal property of equalizer.

We start with 1. We must show that

Now for 2., suppose that we have another category

In this tree, we will define a functor

We now define a functor

Equivalently, we may think of an object of

It is this viewpoint that makes the composition and identities of morphisms natural. That is, the identity on

We can visualize a morphism in

In this tree, we will define a functor

We now define a functor

Equivalently, we may think of an object of

It is this viewpoint that makes the composition and identities of morphisms natural. That is, the identity on

We can visualize a morphism in

Sam Staton, Paolo Perrone and I organized a small meeting between Topos Institute and the Oxford CS department. It was kind of like a workshop, but because of the short time frame that we planned it on and limited funding, we didn't invite all the people we'd like to invite or have it open to applications, so we called it a "meeting." Anyways, we managed to make some good progress on some open problems within categorical systems theory, and it was a lot of fun, so this is a short retrospective on what worked, what didn't work, and directions to pursue in the future.

The meeting was fairly loosely structured. We had two talks at the beginning, one from David Jaz on double categorical systems theory, and another from Sam Staton on LazyPPL. The rest of the time was spent on:

- Talking in small groups about math.

- Writing up what we talked about on the LocalCharts forest.

- Explaining things we talked about to the whole group.

I opened the meeting by asking participants to do three things.

- As much as possible, attempt to ground any new theory with concrete examples.

- Write things down on the forest on the day that you discussed them, so that you won't forget them, and so that people interested in the topics of the meeting who didn't attend the meeting wouldn't be too left out.

- Be comfortable with the idea that you might spend three hours teaching existing theory to people who aren't familiar with it yet. Transmitting knowledge is a good outcome of the workshop and not at all a waste of time.

The last request was I think the most successful idea; people were pretty happy at the end of the workshop about things that they had learned. For the second request, I tried to set aside time at the end of each day to write, but it was very tempting to continue conversations into this time instead of writing, and also it was somewhat hard to write at the end of the day, when everyone was tired from doing math all day and looking forward to dinner. The first request I think was a good idea, but very easy to forget when you are a category theorist! So I think that it's worth asking people to do in the future, even though we weren't very good at living up to it.

We gathered together things that people wrote at the workshop into Oxford-Topos Meeting 2024 - Outcomes. Some people ended up writing a lot, others none at all. I ended up not writing very much because I was hovering around helping people install forester. Hopefully in future events, everyone will have forester installed and be used to forester syntax before designated writing times.

So my dream of having all of the discussions captured on paper for those who weren't present didn't quite materialize. But fortunately I can talk a little bit now about some of the topics of the discussions that I participated in.

Note: I'm not putting the list of people in each discussion in case people wish to keep that private, but if you were in a discussion and you want to add your name, please just edit this!

I ended up in two discussions on port-Hamiltonian systems. Both of these discussions were somewhat one-sided, in that they mostly consisted of me explaining what I did in my masters thesis. I want to emphasize that I did not set out for this meeting to consist of me shilling for my own work, but it seemed like people were interested and enjoyed learning about it.

However, I was especially pleased that after I went through some of the big gaps in my thesis which had to do with my lack of knowledge of differential geometry: Paolo Perrone was kind enough to teach me some intuition about integrable forms. Specifically, the kernel of an integrable 1-form

Then, as far as I understand it, the idea Paolo was proposing was to replace the relations that I use in my thesis with something like forms which vanish on the relations. The problem that I was running into in my thesis is that, when thought of as relations, linear subbundles of vector bundles don't necessarily compose because of constant-rank issues. Perhaps moving to forms would allow me to talk about non-constant-rank linear subbundles? Anyways, I'm excited to investigate this direction, and not having a good intuition for integrable forms was something that had bothered me for a while so I was happy to learn about that.

Another group I participated in tackled the problem of stochastic behavior of dynamical systems. There is a good story for "representable behaviors" within categorical systems theory, but it was unknown how to generalize this to talk about behaviors of a Markov chain.

We were able to come up with a definition for representable stochastic behavior which mimicked the classical notion of "a stochastic process adapted to a filtration" using some techiniques from quasi-Borel spaces. I wrote up some preliminary notes on this here, but that does not capture where we ended up going on this topic, and hopefully there may end up being a paper on this.

Funnily enough, our group was originally interested in trying to make a categorical systems theory for stochastic differential equations, but we ended up getting sidetracked after we slogged through an hour of half-remembering functional analysis. There were some promising directions here that I hope we circle back around to though.

I was not in the group that discussed double operads, and in fact to a certain extent, I don't think it was a group, it was a one-man show of Kevin Arlin sitting down and grinding out higher category theory, and the result are here: kda-0003.

This was especially cool because in David Jaz's opening talk he said that he's wanted a good definition for double operad for years.

I think the lesson from this is that sometimes it's OK to have a group of 1! Working with other people can spark ideas that it is best to work out individually.

It was a lucky coincidence that Thorsten Altenkirch happened to have been scheduled to give a talk during the meeting, because I learned about the concept of observational type theory and higher observational type theory from this talk.

Or rather, what really happened is that Thorsten gave a talk, and then later on, David Jaz explained to me why it was really cool.

As far as I can understand, the idea of higher observational type theory is that each type constructor in a type theory (i.e. sigma, pi, etc.) should come along with a definitional equality for what the equality type on that type is equivalent to. For instance, equality for the universe type should be definitionally equal to isomorphism, so univalence becomes definitional instead of propositional.

This seems really cool to me, because it is exactly what I want for combinatorial type theory. Namely, if I write down a combinatorial type, I want to automatically compute a definition for identifications between two elements of that combinatorial type: I want to automatically derive from the definition of a graph a definition of graph isomorphism!

I also want to take this one step further: from the definition of a graph, I want to automatically derive a notion of edit of a graph!

Unfortunately, it seems like there hasn't been much published on Higher Observational Type Theory yet: it's being kept somewhat under wraps as it develops.

I think I only really need a fragment of the full power of Higher Observational Type Theory to do what I want, so David Jaz and I discussed some ways of doing HOTT "on the cheap".

But these were not all of the topics discussed at the meeting: these are only the topics that I know about and participated in! I encourage anyone who attended the meeting but didn't get much of a chance to write during the meeting to write up thoughts while the thoughts are still fresh, and if they feel comfortable, share those thoughts!

- When you are organizing something for academics, it's good to have a half-hour buffer at the beginning and tell people to show up at the beginning of it, so that when they inevitably show up late, the rest of the schedule doesn't have to be shifted.

- Keeping to a schedule is hard. But that's OK: you don't necessarily need to keep to a strict schedule in order to get things done!

- Small is powerful. Generally the most productive discussions involved 2-3 participants, even if there were more than 3 in the group. That being said, there is a balance between "everyone contributes" and "people who don't have the same level of experience get to be a fly on the wall and see how people with more experience handle a subject", and I think that sometimes it is more productive to slow down a discussion and keep everyone following, to accomplish the "learning things" objective. All that being said, I think that it is very hard to do math in a group of >5.

Overall, people said that they had a good time at the meeting, so I hope to do this again some time. I honestly think both the small discussion groups and the overall small number of people were both assets, and in fact the small number of days was also somewhat of an asset because it forced people to focus. So I think "scaling this up" doesn't look like inviting more people for a longer time: I think it looks like inviting different groups of people semi-frequently. Of course, this is only practical when the groups of people happen to be in the same place, but perhaps this is possible if small meetings like this can "piggyback" over other events like conferences. And I encourage other people to organize similar small events and not invite me, but still write up the results on localcharts: I think the ideal number of this kind of event is much larger than would be practical for me to attend!

In categorical systems theory, one of the great features is that trajectories are representable. That is, a trajectory of a certain system is a morphism from a special "clock system" into that system. Different choices of clock systems can give you different notions of trajectory, i.e. trajectory for all of

However, we don't yet have a good notion of trajectory for systems defined by stockastic kernels

Classically, such a trajectory is a collection of random variables

Or, in other words, for all

We were thinking about representing the data of a trajectory as a map

In order for this to work, commutativity of the above square needs to be equivalent to the condition above involving conditional expectation.

Two things that don't work are sending

The problem is that we need some notion of

David Jaz Myers proposed an interesting idea, which is to have a "review" taxon that shows up in a different section than backlinks. Then people can leave reviews by writing a tree with taxon "review" that links to the tree with which they find problems.

When the review is addressed, it can be marked as such.

One core requirement for Davidad's AI safety program is a very expressive logic for talking about the behavior of systems. By necessity, this must support a notion of probability.

However, the best tool for logic, topos theory, doesn't necessarily play very nicely with probability. How can we extend topos theory to deal with probability?

One idea to rectify this is to try and work out a theory of measurable topoi, analogous to the theory of measurable locales. This has been studied to some extent in the nlab page measurable+space#relation_to_boolean_toposes, which links A Sheaf Theoretic Approach to Measure Theory.

However, a geometric morphism between Boolean toposes is not stochastic. How can we get something like Markov kernels back into the picture?

Gelfand-type duality for commutative von Neumann algebras gives a Gelfand duality between commutative von Neumann algebras and measurable locales.

A Markov kernel between commutative von Neumann algebras is a linear map (with some other properties) -- i.e. it doesn't necessarily preserve multiplication. The paradigmatic example of this is that a map out of the singleton space (whose von Neumann algebra is just the complex numbers), corresponds dually to the expectation of observables, which is a linear map but does not preserve multiplication except for when two observables are independent according to the probability measure.

How can we extend this kind of map from locales to topoi?

Perhaps we can do the following. Define a "measurable topos" to be a topos

Then a Markov kernel from

Follow along at forest.localcharts.org/aria-0001.xml!

- Motivation

- Informal collaboration

- Formal collaboration

This is the source document for my talk at Davidad's second ARIA workshop in Birmingham on safe-by-design AI. From this document, I produce the pdf presentation slides and this HTML forester page.

Successful implementation of Davidad's program thesis over the next 3 years implies something like

- Several new fields worth of novel math research

> 1,000,000 lines of code

This is only possible if we either:

- Clone Urs and ekmett a couple of times and form them into an (aligned) borg-like mindmass, or

- Get really good at collaboration.

The code also needs to be correct and efficient.

- Informal technical writing: overleaf+arXiv

- Technical computing: github repositories containing arbitrary code

If you are really lucky, the github repository will be a maintained package installable through a package manager, and if you are really really lucky, the maintainer won't graduate in a year and forget about it.

- Papers: too slow, standalone.

- Blog posts: better as advertisements/summaries for a larger audience. Also usually fairly standalone.

- Slack/zulip: too fragmented, too short

- Roam Research: not designed for real

book-length mathematics. - nLab: past attempts to encourage people other than Urs to do novel math on the nLab have failed, unclear why

- Gerby (stacks project software): powers arguably one of the most successful giant mathematical collaborations in history. Not explorative, janky

- Forester... just might work??

I've thought about this a lot: see a more complete survey here.

- Monday morning (UK time): DJM writes down new definition

- Monday afternoon (EU time): Matteo adds some key lemmas

- Monday afternoon (Pacific time): Sophie spots hole

- Tuesday morning (EU time): A long-time lurker comments for the first time on idea for patching hole

- ...

- By Thursday night: Enough material for a paper

- Friday morning (UK time): Organize material into a paper by writing an abstract, collating background+new material into a reasonable order, and then exporting to arXiv-compatible LaTeX. All authors of transcluded material notified and given a chance to review.

- Friday afternoon (UK time): pub.

AND THEN WE DO IT AGAIN THE NEXT WEEK.

Forester takes a collection of files with TeX-like syntax and produces both a static website and LaTeX.

Key features:

- Transclusion

- Linking, backlinking, and citation

- Macros

- TikZ

\to SVG - Customizable LaTeX export

- Better error messages than LaTeX

Forester for the Woodland Skeptic is an introduction to forester for localcharts, and the official introduction is Build your own Stacks Project in 10 minutes. You can see the README

- Transclusion: the operad of writing

- Linking, backlinking, and citation: the best organization method known to humanity

- Macros: separation of intent from style

- TikZ

\to SVG: diagrams, diagrams everywhere - Customizable LaTeX export: the magic of XML

- Better error messages than LaTeX

Definitions, theorems, sections, references, etc. are all separate units (called trees) in Forester which can be freely included into other documents via transclusion, possibly recursively.

In addition to transclusion, you can also just link other pages, which will automatically show up as a link to, say, Definition 3.4 if it happens to be on the same page, or Definition [double-category] if not. Each tree records which other trees link to it, and displays those trees at the bottom.

Forester mimics the LaTeX macro system, and expands a single macro definition within text, inline math (which is displayed via KaTeX), and TikZ that gets compiled to svg. Macros also are tree-local rather than global to the whole forest, though one tree can import the macros from another tree.

Specifically, you can just copy-paste commutative diagrams from quiver into forester.

LocalCharts is live!

- Medium-sized forum

- Small but growing forester instance

- This talk built via forester

- Compatible with UK law for government projects

I have spent the last year (actually funnily enough starting with the first time I met Davidad) setting up the localcharts ecosystem, which includes a collaborative markdown editor, a discourse server, and an automated build system for forester which builds the localcharts forest from its git repository.

We have docs on how to use the localcharts forest.

And the whole system runs on (EU/UK)-hosted services, fit for use in a program sponsored by the UK government.

What does it mean to do math on the computer?

- Logician: propositions as types.

- Characteristic Algorithms: Martin-Lof type checking

- Programming languages: Isabelle, Lean, Coq, Agda

- Algebraist: Symbolic rewriting

- Characteristic Algorithms: Groebner bases, e-graphs

- Programming languages: Mathematica, Macaulay2, OBJ3, Z3

- Engineer/statistician: Numerical computing

- Characteristic Algorithms: Euler's method, MCMC, gradient descent

- Programming languages: Fortran, MATLAB, Julia

- To a logician, that means turning propositions into types, and proofs into terms of those types, in a language like Agda, Coq, Lean, etc. In this way of

doing math , however, the only algorithm is the type-checking algorithm. Of course, by the Curry-Howard correspondence constructive proofs are equivalent to functions, and those functions might be interesting algorithms. But there is a significant difference between the use cases offormal verification of an algorithm andproof by typechecking. It's nice to formally verify your algorithms, but when your task iswrite an algorithm for a task , the constraint that your program has to also be a proof of that program's correctness slows things down. And there are many algorithms other than the typechecking algorithm that we care about. For instance... - To an algebraist, doing math on a computer means

using a variety of algorithms to rewrite symbolic equations. One could see this as tactics for proof, but I have yet to see aGroebner base tactic or ane-graph tactic implemented in a proof assistant. It could be an interesting research task to build such a proof assistant, but this is somewhat of a distraction when prototyping new symbolic algorithms. And additionally, the task ofwriting a tactic engine is significantly enough different fromwriting proofs using that tactic engine that it's worth thinking about as adifferent way of doing math on the computer . Classically computer algebra is often done in in dialects of LISP, or special-purpose solvers written in high-performance languages like C++; we might want to do it in Rust or Julia. But that is not the extent of math on a computer. - To an analyst,

math on a computer means numerical methods. In the glorious future, we will have numerical methods written in Lean that compile to the GPU. But we are not yet in the glorious future. Especially for experimentation, we will want to use languages suited for high-performance which classically meant Fortran or C, but now includes Julia, Rust,the ecosystem of prewritten Fortran/C/C++ that has python wrappers, and Haskell DSLs that compile down to Fortran/C/C++/CUDA (which is how Ed Kmett 100xed state of the art performance for ray tracers).

Dilemna:

- Don't want to rewrite tensorflow

- Don't want to write everything in Python

Solution: Models should be language-independent

Algorithms are

We don't want to rewrite tensorflow, but we also don't want to write everything in python.

Eventually, we should just develop a language which

Even if we manage to build the next great mathematical programming language, it will still be good to have language-independent models, because we aren't going to convince the entire world to use a single programming language, but we want to have safe AI for the entire world.

Fortunately, we have Categorical Systems Theory, which tells us that a model is an initial algebra in some doctrine of systems. Elements of this initial algebra are just data that is independent of any particular computational paradigm.

Of course, in order to implement all the operations of categorical systems theory, we will need to write functions for editing, composition, simplification, analysis, simulation, etc. These will operate on language-independent data, but will not themselves be language-independent data. Ideally, we write basic operations like editing, composition, simplification, etc. in a single language (Rust perhaps), and then offer some kind of ffi.

What can be cross-language?

- Algebraic Data Types

- Generic types

- Multidimensional Arrays

- Presentations of algebraic structures (i.e. ring presentations by generator and relations).

- Knowledge bases, i.e. collections of

facts in the style of prolog.

What can't?

- Arbitrary functions

- Arbitrary dependent types

- Type theory for data

- Embed into existing languages

- Build storage system

- Make a type theory expressive enough for the models we want, and such that all well-formed types

make sense to serialize cross-language . I have some clever ideas for how to do this that I won't go into right now, but can be found (links) - Write a compiler that compiles type definitions to produce wrapper types in all relevant languages, similar to protobuf.

- Build systems for storing serialized models that can be accessed programmatically.

It would look something like:

struct Graph {

V: fintype;

E: fintype;

src: E -> V;

tgt: E -> V;

};

- Structured version control for models?

- Version control the source of truth, which could be

- The model itself

- Stochastic model search + random seed

- Textual DSL

- A composition diagram with other models inserted

- Everything downstream of source of truth: deterministically cache

- Nix is current state-of-the-art.

Originally, the idea was that we would version-control this data, directly. I still think that this is a good idea, but I think that there is a larger picture. Namely, you should version-control the

The state-of-the-art current system for caching the results of arbitrary computation is Nix. It is not the most ideal platform for a variety of reasons, including speed, but we have to at least learn from how it works if we want to do better.

We can hack version control of structured data into Nix via hashing the

Interestingly enough, this would also be keyed on the hash of the versioning software, so every update to the versioning software would also cause all caches to be invalidated, and patches applied from scratch. Fortunately this is not too expensive: git does this every time you clone.

Another sidenote: the difference between nix and content-addressed stores is that content-addressed stores are keyed by the hash of the thing that is stored, while nix is keyed by the hash of the inputs to a (more or less) pure function (the nix evaluator).

- I thought literate programming is dead... but is it?

- Rustdoc is literate programming

- 1lab is literate programming

- PBRT is literate programming

- Jupyter is literate programming

- Caching enables principled notebook computing

- Explainable AI involves AI... and explanations!

Literate programming has a long history, starting with Knuth's implementation of TeX. One might argue that the traditional noweb style of literate programming has died out to some extent. But to a certain extent, if you consider notebooks or "documentation generators" like rustdoc to be literate programming, then literate programming is still going very strong.

Also, the 1lab is a really interesting project: it combines formal mathematics with informal mathematics via literate agda.

One of the Achilles heels of writing scientific documents as, say, Jupyter notebooks is that caching is hard. If you want a live-refreshing preview, then you can't rerun the notebook from scratch on every change. But if you want to avoid issues related to out-of-order cell execution, you have to rerun the notebook from scratch. And if you want to build notebooks in CI, then not having a cache system can mean >30 minute CI times, which is a massive drain on productivity.

However, if your scientific system is built from the ground up to have fine-grained caching, including for simulation runs and figures, then it becomes practical to offer a live-reloading compilation server for your document, which only reruns cells when necessary. And by cache-sharing, someone else can edit your document without having to run your (potentially extremely expensive) simulations. You can also delegate computation to server farms and just pull in the result (link to post on this)

Finally, a significant part of scientific modeling is informal explanation! Human language should be a first class citizen in the modeling workbench.

- Success requires scaling our development via more effective collaboration

- Informal collaboration needs to scale beyond

couple of mathematicians write standalone paper - Formal collaboration needs to scale beyond

software package for single type of model in a single language - Next steps: intertypes

We shape technology for public benefit by advancing sciences of connection and integration.

-- Topos Institute

I will be taking notes on repl.it during the Davidad's second ARIA Workshop. If you would like to join my collaborative session in a google-docs-like fashion, reach out to me either via DM on localcharts or via the category theory zulip, or via any other means you have at your disposal.

You can see the live-updating notes here, but note that the lifespan of that URL is unclear.

ARIA has a motto of "people then projects". They believe in individuals over committees, but they also recognize the importance of long-term projects.

Davidad is running a program based on his thesis about an opportunity space.

Formal verification and AI are both large areas, but they are not yet combined within a single field.

We are starting with TA1.1, which is theory. So likely this program will be somewhat "staggered": we will go from theory to implementation.

The goal is safe-by-design AI, and the key to safe-by-design AI is formal world models. If we can get the AI to understand the world formally and explain their reasoning/plan formally, then we can use non-AI tools to reason formally about the actions of the AI.

We may have very strong AI, but it would be isolated in a box.

Emmet Shear had an interesting thread recently about the mythological analogues to AI, and how we shouldn't try to enslave AI. I wonder if we can phrase this as "a wonderful gift to the AI; beautiful puzzles to solve."

If we can formalize world models in this way, then we are much better at science in general, maybe that makes the AI happy? Anyways, this is not the main thread of the program.

Compositional Probabilistic Model Checking with String Diagrams of MDPs successfully scales to POMDPs with billions of states! Very cool.

Infinite-dimensional port-Hamiltonian systems are a subject of interest! markus-lohmayer will be happy. Also see this and this.

Different flavours of compositionality: transition between systems might be only possible in certain states of the system? Not totally sure what this means.

Colimit composition: gluing together state spaces. Limit composition: imposing relations on a-priori independent systems.

I wonder, is this just "colimits in syntax, limits in semantics"? Perhaps there are also "colimits in semantics", would this correspond to limits in syntax?

Reconfigurable port-Hamiltonian systems. Motivation: we want to model continuous-time interaction between dynamically created and destroyed entities. He screenshotted my thesis!

We want to think about the interaction between non-normalization of distributions, as is often used computationally, and infradistributions (i.e. convex sets of distributions)?

Is V-Rel or V-Prof a "big tent" for semantics. Different models all eventually should relate within a single world.

Final words: the important thing is to give the AI an expressive language for talking about the world. It doesn't matter so much if proof search within that expressive language is practical by traditional means.

You may not like it, but this is what peak chat performance looks like

-- Eigil Rischel

Hello Eigil, can you see this? Try typing something?

Hi Mike! Try typing something to see if it works.

Yeah, I can see it -E

HI Owen! From Mike! I'm taking notes on digital paper - I prefer handwriting for notes - But thanks for the invite here!!

Makes sense! Well, I'm glad that the repl.it successfully supports at least three people.

Is there a way to typeset this and see the results?

Yes: https://fe4c8d58-a033-4576-8a20-909cc6f47a50-00-jxvz73lbppio.spock.replit.dev/ocl-001K.xml

This link still doesn't work - even with the `l` but no big deal - I was just curious to see the typeset results

That's frustrating!! Maybe it has to do with cookies on my computer??

Yeah maybe - no big deal

Huh, it works on my phone.

"site can't be reached" for me - oh well

Very strange.

@Eigil, how about you, can you see it?

Yeah it works for me.

Huh, weird.

Owen talks about the type signature of different data structures.

You can turn a dependent type into a data specification - but only if the types which things depend on are restricted to be finite types, and you only ever have function types where the domain is one of these finite types.

An element of such a type basically amounts to a database, a set of rows and columns

The idea is that this data language is what goes inside all the arrows in Davidad's big diagram describing his program.

- https://onnx.ai/

- https://flatbuffers.dev/

- https://github.com/pyprob/ppx

ONNX is storage for models, PPX is live-communication protocol between running programs. Which paradigm do we want? Maybe both?

Owen has been envisioning this as a format for model interoperability, but perhaps also good as a format for "recording things we've learned in semantics."

The most condensed explanation of logic programming is that it's normal programming, except you get to make "non-deterministic choices" and you succeed if any of the choices "are good".

For instance, suppose that you suspect there might be an alligator behind some of five doors. Normally, you have to choose one of the doors, and if there is an alligator behind it, you die. But in logic programming, you get to choose all of the doors, and if any of the doors doesn't have an alligator, then you live.

One way of implementing logic programming is just with for loops. For instance, in Julia one could write

for door in doors

if !hasalligator(door)

return :alive

end

end

return :dead

This has the disadvantage that if you want to make multiple choices, you have to nest the for-loops. For instance, maybe you have a chance when you open the door to dodge left or right, and if the alligator lunges in the opposite direction, then you are safe. This would be written as:

for door in doors

if !hasalligator(door)

return :alive

end

for direction in [:left, :right]

if gator_direction(door) != direction

return :alive

end

end

end

return :dead

If you have to make too many choices, then your code will go off the right side of the screen. Logic programming gives a "magical" choose operator with which we can rewrite the above to look like this:

door = choose(doors) if !hasalligator(door) return :alive end direction = choose([:left, :right]) if gator_direction(door) != direction return :alive end _ = choose([])

Now we represent failure by choosing from an empty list: nothing past that line can possibly run. This code above doesn't run in Julia (unless you do some fancy meta-programming perhaps). However, we can rewrite this in Haskell using the List monad to something like the following

do

door <- doors

if !(hasalligator door)

then return Alive

else do

direction <- [Left, Right]

if (gator_direction door) != direction

then return Alive

else []

A more serious example of logic programming is graph homomorphism finding (see here for a longer introduction to this subject). Suppose that we have two graphs

With logic programming, we can write a (probably inefficient) algorithm to find graph homomorphisms in the following way.

For each vertex in

The trick to efficient use of logic programming is to "fail as fast as possible". I.e., every time we make a choice, we want to figure out as soon as possible if no future choices will lead to success, and ideally never even have to run those loops. This is known as "pruning"; eliminating whole branches of the search tree before we ever have to reach their leaves.

There are a wide variety of languages and libraries that implement logic programming, but I will only cite a couple for now: Backtracking, Interleaving, and Terminating Monad Transformers, Picat. Also see this blog post.

This is an exposition of a technique for logic programming that makes use of continuation passing. If you don't know what either of these things are, don't worry! I'll explain both on the way.

The most condensed explanation of logic programming is that it's normal programming, except you get to make "non-deterministic choices" and you succeed if any of the choices "are good".

For instance, suppose that you suspect there might be an alligator behind some of five doors. Normally, you have to choose one of the doors, and if there is an alligator behind it, you die. But in logic programming, you get to choose all of the doors, and if any of the doors doesn't have an alligator, then you live.

One way of implementing logic programming is just with for loops. For instance, in Julia one could write

for door in doors

if !hasalligator(door)

return :alive

end

end

return :dead

This has the disadvantage that if you want to make multiple choices, you have to nest the for-loops. For instance, maybe you have a chance when you open the door to dodge left or right, and if the alligator lunges in the opposite direction, then you are safe. This would be written as:

for door in doors

if !hasalligator(door)

return :alive

end

for direction in [:left, :right]

if gator_direction(door) != direction

return :alive

end

end

end

return :dead

If you have to make too many choices, then your code will go off the right side of the screen. Logic programming gives a "magical" choose operator with which we can rewrite the above to look like this:

door = choose(doors) if !hasalligator(door) return :alive end direction = choose([:left, :right]) if gator_direction(door) != direction return :alive end _ = choose([])

Now we represent failure by choosing from an empty list: nothing past that line can possibly run. This code above doesn't run in Julia (unless you do some fancy meta-programming perhaps). However, we can rewrite this in Haskell using the List monad to something like the following

do

door <- doors

if !(hasalligator door)

then return Alive

else do

direction <- [Left, Right]

if (gator_direction door) != direction

then return Alive

else []

A more serious example of logic programming is graph homomorphism finding (see here for a longer introduction to this subject). Suppose that we have two graphs

With logic programming, we can write a (probably inefficient) algorithm to find graph homomorphisms in the following way.

For each vertex in

The trick to efficient use of logic programming is to "fail as fast as possible". I.e., every time we make a choice, we want to figure out as soon as possible if no future choices will lead to success, and ideally never even have to run those loops. This is known as "pruning"; eliminating whole branches of the search tree before we ever have to reach their leaves.

There are a wide variety of languages and libraries that implement logic programming, but I will only cite a couple for now: Backtracking, Interleaving, and Terminating Monad Transformers, Picat. Also see this blog post.

The question now becomes: how does one implement logic programming in a language like Julia, which doesn't have monads? One way is to implement a virtual machine that supports non-deterministic choice. This is essentially how we got a 10x speedup for homomorphism finding in Catlab. This is also how the e-matching algorithm works in egg. However, this is not very flexible, and somewhat tedious, involving stuff like register allocation. A more elegant way to do this is via continuation passing. I learned this technique from reading the Metatheory source code, but it is a quite old technique; Alessandro (the author of Metatheory) says that he learned it from Gerald Sussman.

We first review the basics of continuation-passing style.

Continuation passing is a fundamental rethinking of control flow. In normal programming, a function returns a value, and the rest of the code gets to decide what to do with that value. In continuation-passing style, you pass the "rest of the code" in as an argument to the function, and the function gets to decide what to do with the rest of the code. The "rest of the code" is known as the continuation.

If you've ever written javascript with callbacks, you should be familiar with this idea. The way that you might want to make a request to a server is something like:

const response = makeRequest(url, data) // do stuff with response

However, the way that this is typically done in javascript is:

makeRequest(url, data, function(response) {

// do stuff with response

})

The reason for this is that javascript is single-threaded, so in the first piece of code if waiting for the response to come back takes a long time, nothing else can run. The second piece of code instead sends off the request, registers the "callback" to be run when the response eventually comes back, and returns, letting other code run.

This style of doing things created the infamous situation known as "callback hell", where a complex sequence of actions ended up going far off the side of the screen, as callbacks were nested inside callbacks inside callbacks ad naseum.

Fortunately, in a good language one can automatically transform code written in the first style into code written in the second style, so that you can have the readability benefits of the first with the performance of the second. This is the point of cats-effect and the companion library cats-effect-cps (cps stands for "continuation passing style").

Now we can explain how continuation-passing is used for logic programming.

The secret to Logic Programming via Continuation Passing is to call the continuation multiple or no times. If we transform

x = choose([1,2,3]) y = choose([x, x*2]) ...

into

choose([1,2,3], x -> choose([x, x*2], y -> ...))

then it becomes super clear how to implement choose!

function choose(xs, f)

for x in xs

f(x)

end

end

This is the basic idea, but there is more to say (to be continued).

This is just a quick note to write down a notion of editable stuff types (see also stuff calculus). To be refined later.

Normally, a stuff type is a functor from

If we wanted to version control a graph, we would want to be able to add or delete vertices (and also edges). We can model editing finite sets by using

Then, an editable stuff type is a lax double functor

The stuff calculus story should then extend to editable multivariate stuff types.

What I don’t understand about Forest (and what is keeping me from just go ahead and write everything there) is the syntax… why a new one? Why not Markdown or LaTeX or a reasonable hybrid like the nLab?

-- Matteo Capucci

This is a tutorial aimed at people who want to get started writing on the LocalCharts forest, but don't know how forester works/are frustrated at how forester is different from tools they may be used to. This is intended to be a direct introduction than the official tutorial (which has more motivation for forester), and also has some localcharts-specific content.

Forester is a tool for writing large quantities of professional quality mathematics on the web. To support large quantities, it has extensive interlinking/backlink/transclusion support. To support professional quality, it has a uniform, LaTeX-like syntax with macros that work the same in prose, KaTeX-based math equations, and LaTeX-produced figures.

An example forester document showcasing basic features looks like this:

\title{Example document}

\author{owen-lynch}

\import{macros} % the localcharts macro library

\date{1970-01-01}

% A comment

\p{A paragraph with inline math: #{a+b}, and then display math}

##{\int_a^b f(x)}

\p{\strong{bold} and \em{italics}}

\ul{

\li{a}

\li{bulleted}

\li{list}

}

\ol{

\li{a}

\li{numbered}

\li{list}

}

\quiver{

\begin{tikzcd}

A \ar[r, "f"] & B

\end{tikzcd}

}

\def\my-macro[arg]{Called \code{my-macro} with argument \arg}

\p{\my-macro{tweedle}}

\transclude{lc-0005} % a subsectionand would be rendered like this:

A paragraph with inline math:

bold and italics

- a

- bulleted

- list

- a

- numbered

- list

Called my-macro with argument tweedle

More stuff

Forester is written in .tree files in the trees/ directory. Trees are named namespace-XXXX, where XXXX is a number in base 36 (using digits 0-9 then A-Z), and namespace is typically something like the initials of the person writing.

Now that you've seen the overview, I encourage you to try writing a page. Instructions for setting up the localcharts forest are in the README for the localcharts forest. Once you've done that, you can come back here and learn forester in some more depth.

But before you go on, four things.

- There is a convenient script for making new trees numbered in base-36:

new <namespace>. For instancenew oclproduces a new filetrees/ocl-XXXX.tree, whereXXXXis the least strict upper bound of the set ofYYYYsuch thatocl-YYYYexists in the forest. We use this for most prose, but we also use theauthors/,institutions/, etc. directories to put bibliographic trees, named after their contents instead of automatically named. - If you have any questions or complaints about this tutorial, please comment below. If you have general questions about forester, I encourage you to join the mailing list.

- Don't be afraid to poke around at either the source code for localcharts or the source code for Jon Sterling's webpage; you may find some interesting features!

- Be aware that forester is new software in active development, and Jon Sterling is not afraid to make breaking changes. This is a blessing and a curse; he might write something which breaks things, but he also might write the feature that you really want if you ask nicely on the mailing list!

Forester has a lot of interesting features around large-scale organization of documents, but it's worth reviewing the nuts and bolts of basic typographical constructions first, so that you have tools in your toolbox for when we move to the large-scale stuff.

Forester's syntax is a little bit unfamiliar, however I hope by the end of this section, you will see that the design decisions leading to this were not unreasonable, and lead to a quite usable and simple language. This is because there is mainly just one syntactic construct in forester: the command. The syntax is \commandname{argument}, which should be familiar to anyone who has used LaTeX.

The most basic commands just produce html tags, as can be found in the table below.

| Name | HTML | Forester |

|---|---|---|

| Paragraph | <p>...</p> | \p{...} |

| Unordered list | <ul><li>...</li><li>...</li></ul> | \ul{\li{...}\li{...}} |

| Ordered list | <ol><li>...</li><li>...</li></ol> | \ol{\li{...}\li{...}} |

| Emphasis | <em>...</em> | \em{...} |

| Strong | <strong>...</strong> | \strong{...} |

| Code | <code>...</code> | \code{...} |

| Pre | <pre>...</pre> | \pre{...} |

| Blockquote | <blockquote>...</blockquote> | \blockquote{...} |

Note that unlike in markdown or LaTeX, paragraphs must be explicitly designated via \p{...}, rather than being implicit from newlines.

Also note the conspicuous absence of the anchor tag: we will cover links in .

It is also possible to output any other HTML tag via the \xml command. For instance, I used \xml{table}{...} to produce the table above.

So to sum up, basic typography in forester is just a LaTeX-flavored wrapper around HTML elements. Given that we have to use LaTeX for math (because it's too much effort to relearn how to typeset math euations), it makes sense to just have a single syntax throughout the whole document.

To produce inline mathematics, use #{...}. Math mode uses KaTeX, so anything supported in KaTeX will work in forester. Note that math mode is idempotent: #{a + b} produces the same result as #{#{a} + b}. Display mathematics can be made with ##{...}.

The killer feature of forester is the ability to include LaTeX figures as svgs. This is done by compiling the figure with the standalone package, and then running dvisvgm. The results are cached, so that when you make changes elsewhere in your document, the figures do not have to be recompiled.

You can access this feature with the \tex{...}{...} command. The first argument is the preamble, where you can put your \usepackage{...} statements. The second argument is the code for your figure, using TikZ or similar packages. The localcharts macro package (accessed with \import{macros}) also provides a command \quiver{...} which has the right preamble for copy-pasting quiver diagrams. Note that you must remove the surrounding \[...\] from the quiver export, or you will get weird LaTeX errors.

In this section, we discuss how to handle ideas that are not contained in a single file in quiver.

The easiest way to connect ideas is via links! Forester supports several different types of links. The simplest is markdown-style links, written like [link title](https://linkaddress.com). Because linking to the nlab is so common in localcharts, we also have a special macro \nlab{...} (when you have \import{macros} at the top of your file) for linking to nlab pages by their title, tastefully colored in that special nlab green like so: Double category.

Additionally, pages within the same forest can be referenced just by their tag, as in [Home page](lc-0001), or "wikilink style" with [[lc-0001]], which produces a link titled by the title of the referred page, like so: LocalCharts Forest. Note that internal links have a dotted underline. Moreover, on a given page

Transclusion includes the content of one file into another file. The basic command for transcludes is \transclude{namespace-XXXX}. This is similar to the LaTeX support for multi-file projects, but is used much more pervasively in forester. For instance, instead of a \section{...} command, the general practice is to make each section a separate .tree file, and have the larger document \transclude them.

This is also how mathematical environments are supported. What in LaTeX would be something like:

\begin{definition}

....

\end{definition}in forester is generally accomplished by creating separate .tree file with \taxon{definition} at the top. When transcluded, this looks like a definition environment. For example:

The discrete interval category

In general, \taxon{...} is used to designate the type of a tree, like definition, lemma, theorem, etc., but also non-standard ones like person, institute, reference. You can search for taxon in the LocalCharts forest to see how it is used.

Splitting up your writing between so many files can be a pain, but there is a reason behind it. The philosophy behind forester is that in order to pedagogically present mathematics, it is necessary to order the web of linked concepts in some logical manner. However, this order is non-canonical. Therefore, we should support multiple orderings.

From a more practical perspective, one gets tired at a certain point of clearing one's throat in the same manner every time one starts a new paper or blog post, reviewing similar definitions to get the reader up to speed. Being able to reuse parts of a document can alleviate this.

With transcludes, there is also yet another type of linking: a cleveref style \ref{...} command, which produces references like when the referenced item is contained within the current tree, and when the referenced item is not contained within the current tree.

Bibliographic citations are just trees with \taxon{reference} at the top. See here for examples of how to write these. Whenever a reference is linked to in a tree, the bibliographic information appears at the bottom of the page. For instance, I can link to Topo-logie and the formatted citation should appear at the bottom of this page.

One of the main reasons that I chose forester of all the ways of writing math on the web was its support for macros. It pains the soul to have to write \mathsf{Cat} all the time; I just want to write \Cat!

Macro definition is similar to LaTeX, but with two changes. The first change is that it uses \def instead of \newcommand. Recall that \def was the original syntax in TeX, but had some warts, so LaTeX had to change to \newcommand, so this is really just going back to the roots of TeX. The second change is that arguments are named instead of numbered. For instance, instead of

\newcommand\innerproduct[2]{\langle #1, #2 \rangle}you would use

\def\innerproduct[x][y]{\langle \x, \y \rangle}You can take a look at macros.tree for the LocalCharts "macro standard library". To use this in one of your trees, you must have \import{macros} at the top. Finally, note that the same macro definition works in prose, within #{...} or ##{...}, and also within \tex{...}{...}, so you can use the same math abbreviations in your inline equations and in your commutative diagrams, just like you would in a real LaTeX document.

You may have noticed that there are certain commands that tend to go at the top of documents, like \title{...}, \author{...}, etc. These are the frontmatter of the document, and provide metadata for the content.

Most frontmatter commands can be found here.

In this section, we document some other useful frontmatter commands not covered in the above.

One important command for bibliographical trees is \meta{...}{...}. This is used for many sorts of metadata, like doi, institution (used on author pages), external (used to provide an external url, such as a link to a pdf). Particularly important for localcharts is \meta{comments}{true}, which enables the discourse integration on a particular tree so that the tree is automatically crossposted the first time it is visited, and comments from the forum show up beneath it.

Another useful command is \tag{...}. This command is used to add "tags" to a page which might give some hints as to the subject matter. Tags are not displayed on the page, but pages can be queried by tag. This is very useful for producing bibliographies of references on a certain subject, as I did here.

That's all for now; it's time for you to go forth and write some math!

There are three sorts of errors in programming.

The first type of error isn't really an error, it's more of a "negative result to a query". For instance, the access method for a dictionary might return returns a Option value. The None case doesn't indicate that anything has failed per se, it just means that the key that you attempted to access doesn't exist in the dictionary. These "errors" are just part of normal programming.

The second type of error is when something external to your program breaks a contract. For instance, a user might make a syntax error in code passed to your compiler, or a server might send ill-formatted JSON in response to a query. This kind of error isn't necessarily recoverable, but it should be reported carefully as feedback to the external source of the error, especially if that source of error is a user. One might argue that part of the job of the program is to help the user abide by the contract, and detailed error reporting is part of that.

The third type of error indicates a serious mistake on the part of the programmer; the logical structure of the program is simply faulty. This error is unrecoverable by definition, because "recovering" from it implies rewriting the program itself, but it may be the case that it can be isolated and ignored by other parts of the program. In a server, this could mean that, say, a single thread crashes and the operation that the user was trying to do fails, but the database does not get corrupted and other operations still work. A healthy program should be robust to bugs in some parts of the codebase, and limit their scope of impact.

The second and third types of error are the same if you view the programmer as a user; it's as important for the programmer to provide themselves with feedback about the correctness of their own code as it is to provide the user with the correctness of their input to that code.

The traditional way that errors are managed in high-level programs is through exceptions. When you throw an exception, you get to "stop the program" in a way that gives a little information as to why the program stopped. And then the program prints out the "stack trace" of what it was currently doing.

But the problem with this is that typically the actual error is not described by the error message; the error could be a false assumption that happened at any part of the stack. For instance, we might assume that a key exists in a dictionary, and thus call a method that will error if the key isn't in the dictionary. If this errors, then we will get a "KeyError" exception or something printed out to the console. But what we won't get is the higher-level knowledge of why that KeyError happened, which is not available to the code throwing the error.

One way to fix this would be to surround all code which could error with try/catch blocks which rethrow any error found with some context about assumptions made in the current context. But this is kind of annoying to do, and additionally I'd end up with huge error types which were essentially linked lists.

What I really want is the ability to "annotate the stack trace." That is, I want to decorate a function call such that if that function call throws an error, that error keeps going, but when the stack trace prints out, at the stack frame of the function call it also shows the context I decorated the function call with. And perhaps also some other convenient annotations, like make it easy to say "also print out the arguments to this function call."

Come to think of it, when a function errors, I should be able to inspect all the local variables at any point in the stack. Somehow I feel like this is how in common lisp works; I know that when an error happens in common lisp it always prompts the ability to enter a debugging session.

Does anyone know about programming languages which have something like this?

I think that the biggest change in my approach to academic writing over the last couple years has been an increasing appreciation for citations, and tracking the history of ideas.

When I wrote my undergraduate thesis, citations were a chore. I would write what I want to write, and then retroactively search for relevant work to fulfill the obligation of citation. However, since then I've learned the value of reading bibliographies and following ideas backwards and forward (i.e. looking at what papers cite the paper I'm currently reading). When you are careful about citation, your writing becomes part of the larger conversation that takes place on the scale of centuries. People use citations to measure quality (just as google uses backlinks to organize search results), but the point of citations is not point-scoring, the point is maintaining the web of knowledge of humanity.

This isn't really supposed to be a revelation, just a thought I had that I wrote down. Probably should add more citations to this.

Before the internet, when producing a scientific artifact (i.e. a paper or book), you could not point to other artifacts directly. You had to instead give a lot of metadata (the title, authors, publisher, date, etc.) and hope that someone else could use that to track down your sources through the resources that they had access to.

With the internet came the ability to point precisely to artifacts, via DOIs. Additionally, via arXiv (or library genesis), we can reliably find artifacts in an instant. This means that following the web of citations is much, much easier than it used to be.

However, while we have improved the ability to find artifacts, the relationship between artifacts remains entirely informal. That is, information about the relationship exists only in the mind of the reader. For instance, if I build a climate model and say that the ocean component is due to (Weber, 1992), this could actually mean a whole variety of things. I could be using the same mathematical model with a whole new implementation, I might be using some existing implementation techniques with a new mathematical model, I could just be inspired by what I read and then just have made something completely different.

To understand this, I want to use the following metaphor. Biological reproduction often involves some sort of "bottleneck." For instance, large trees reproduce via small seeds; the whole genetic information is passed through the seed, and then is recreated as a tree again on the other side.

In scientific communication, there is a similar bottleneck: human understanding. One human might build all sorts of formal structures around an idea, test it rigorously in experiments, write software around it, etc. But in order for that idea to reproduce, it goes through a strange bottleneck: it is committed to paper (or digital paper), and then read by another human, who must recreate in their mind and in their lab all of the formal structures that the first human came up with.

Now, I would argue that this process of transmission is very important for the evolution of ideas. It is a lossy process, but it also encourages productive simplification. One must separate the particulars of a given situation from the general insight that should be conveyed to others.

However, it is a bottleneck. Contrast this situation to the one for software engineering. In software engineering, we can directly use each other's work: we can depend on packages written by other people! Now, sometimes this can encourage bloat because it might be easier to add 100 megabytes of dependencies rather than write 100 lines of code. Still, with the power of other people's code, I can do some absolutely incredible things!

So what would it look like to be able to depend on the scientific models of others in a formal sense, not just in the informal sense? I.e., how can we make an alternative pathway for scientific ideas to reproduce?

Well, one way to do this would be to enlarge the scope of scientific artifacts to include formal specifications of models in addition to their informal writeups. The ML community has something like this via Papers With Code. But the problem with just publishing arbitrary code along with your paper is that in fields other than ML, there are many things that one might want to do with a model other than simply "execute" it; in fact there might not even be a natural notion of "execute". For instance, in epidemiology, your model might be an ordinary differential equation. What other people would want to depend on is not the specific ODE solver that you chose to use, but rather the differential equation itself. If we can depend on the differential equation as a formal object, then we could, say, compose it with other differential equations, or write an algorithm to learn its parameters, or compare it (i.e. take a "diff") with another differential equation. These affordances depend on a formal notion of scientific model that goes beyond just "some python scripts in a github repo."

What is that formal notion of scientific model? The answer is that there is never a single universal way of formalizing things. Just like in software, we have to make design decisions, and sometimes those design decisions lead to incompatibilities, and we have to go back to "transmission via human understanding." But hopefully via category theory we can pin down fairly large classes of formal models, and be able to formally translate between them, so that this "alternative pathway" can be viable in more circumstances, and the links in the human web of knowledge can start to have real teeth.

WYSIWYG interfaces are far more intuitive and popular among laypeople than text-based formats like LaTeX and markdown. Why haven't they just completely won; why is almost every mathematics paper written in LaTeX rather than Word?

One reason is that the informational needs of the author of a document are different from the informational needs of a reader. In good typography, the reader shouldn't notice the details! For instance, boxes used to layout the text should be invisible, and internal reference names (i.e. \label{thm:foo}) should not be exposed. However, the explicit structure of the document should be very easy to read for the author. In other words, the affordances of "digesting" and the affordances of "editing" lead to different user interface designs.

As a sub-problem to this, when I am authoring, it drives me crazy if the mapping from what is on disk to what I can see is non-injective. For instance, I can't see the difference between an italicized word, and an italicized word+space in Word, but it's trivial to spot the difference between \em{this } and \em{this}.

Another reason, connected to the first but slightly different, is that in order to maintain stylistic consistency, the authoring format should support a separation of content and style. For instance, when I write math, I make macros for all of my mathematics notation. For instance, I make a macro \Set for the category of sets, which prints out as

This also extends to document structure. Often, we want a consistent length used for, say, interparagraph spacing. In a WYSIWYG editor, it's impossible to tell the difference between two lengths which are coincidentally the same, and two lengths which are by definition the same, in the sense that they are determined by both referencing the same variable.

This was an after-hours seminar on double categories presented by Kevin and Evan, that we collaboratively took notes on via visual studio code liveshare. The name is just for fun.

In category theory, we are often interested in enriched categories and internal categories.

Defining enriched categories requires some choice of monoidal category

Suppose that

An internal category in

in

such that

and

A small category is a category internal to Set.

A Baez-Crans 2-vector space is a category internal to Vect.

Suppose that

In a preorder, an internal category is just an isomorphism. This is because once we pick

If the object

A locally small strict double category

In more detail, a double category has

- a category

\mathbb {D} _0 of objects, - a category

\mathbb {D} _1 of morphism - a functor

\odot \colon \mathbb {D} _1 \times _{ \mathbb {D} _0} \mathbb {D} _1 \to \mathbb {D} _1 called external composition.

Notation:

However... because

For instance, we have a squares of the form

But then we need coherence conditions for these natural isomorphisms. The biggest one is the pentagon identity

Every pseudo double category

Proof is analogous to the proof of the coherence theorem for monoidal categories.

However, it may not be very "natural" to work with the strictification of a given pseudo double category. So we normally work with pseudo double categories, but pretend that they are strict double categories.

- Objects are sets

X \in \mathsf { Set } - Tight maps are functions

f \colon X \to Y - Loose maps are spans

X \leftarrow M \to Y , which are composed by\usepackage {quiver, amsopn, amssymb, mathrsfs} \begin {tikzcd} && {M \mathbin { \odot } N} \\ & M && N \\ X && Y && Z \arrow [from=2-2, to=3-1] \arrow [from=2-2, to=3-3] \arrow [from=2-4, to=3-3] \arrow [from=2-4, to=3-5] \arrow [from=1-3, to=2-4] \arrow [from=1-3, to=2-2] \arrow [" \lrcorner "{anchor=center, pos=0.125, rotate=-45}, draw=none, from=1-3, to=3-3] \end {tikzcd} - 2-cells are diagrams of the form

\usepackage {quiver, amsopn, amssymb, mathrsfs} \begin {tikzcd} {X_1} & {M_1} & {Y_1} \\ {X_2} & {M_2} & {Y_2} \arrow [from=1-1, to=2-1] \arrow [from=1-3, to=2-3] \arrow [from=1-2, to=2-2] \arrow [from=1-2, to=1-1] \arrow [from=1-2, to=1-3] \arrow [from=2-2, to=2-3] \arrow [from=2-2, to=2-1] \end {tikzcd}

- Objects are sets

X \in \mathsf { Set } - Arrows (tight morphisms) are functions

f \colon X \to Y - Proarrows (loose morphisms) are relation

R \subseteq X \times Y - A 2-cell of the shape

\usepackage {quiver, amsopn, amssymb, mathrsfs} \begin {tikzcd} X & Y \\ W & Z \arrow [""{name=0, anchor=center, inner sep=0}, "R", from=1-1, to=1-2] \arrow [""{name=1, anchor=center, inner sep=0}, "S"', from=2-1, to=2-2] \arrow ["f"', from=1-1, to=2-1] \arrow ["g", from=1-2, to=2-2] \arrow [shorten <=4pt, shorten >=4pt, Rightarrow, from=0, to=1] \end {tikzcd} x \in X ,y \in Y ,R(x,y) \Rightarrow S(f(x), g(y)) - The composition of two relations

R:X \nrightarrow Y,S:Y \nrightarrow Z is the relationx R \mathbin { \odot } S z \Leftrightarrow \exists y x R y,ySz

There is a correspondence between the double category of relations and logic, which goes

- types are sets

- terms/function symbols are functors

- predicates/relation symbols are relations

- entailment is implications

Let

- Objects: sets

- Arrows: functions

- Proarrows

M:X \nrightarrow Y areX \times Y -indexed familiesM(x,y) \in \mathcal {V} . - Cells are natural transformations, if we think of

X,Y as discrete categories, or justX \times Y -indexed families of morphisms of\mathcal {V} .

In order to compose 2-cells horizontally, you use the universal property of the coproduct.

Identities are given by

In this note, I record an alternative way of constructing the cofree comonoid for a polynomial

For a polynomial

Let

Then for any

This presheaf satisfies a Segal condition, which basically means that a length-

Thus,

I then claim that if we quotient

To construct this equivalence, send an element

We then conjecture that we should be able to tell a similar story for "smooth coalgebras" of vector bundles, where a smooth coalgebra of